Google's DeepMind Is Developing An AI Kill Switch To Prevent A Skynet Apocalypse

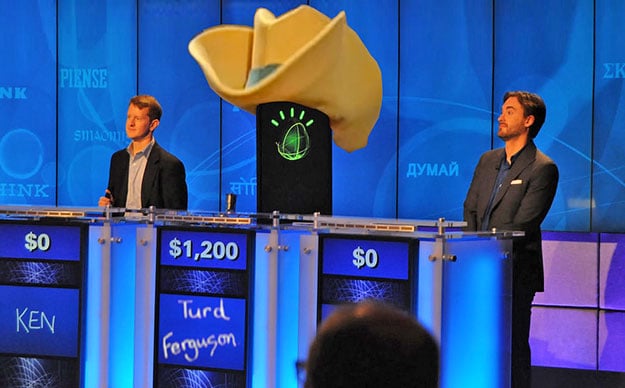

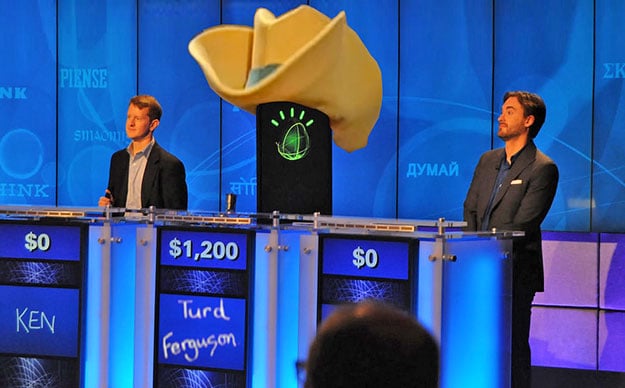

When Jeopardy! champion Ken Jennings made light of the beating he took at the hands of IBM's Watson supercomputer by noting, "I for one welcome our new computer overlords," few at the time took Ken's musing for much more than a light-hearted pat on the back for IBM's big data analytics and AI ambassador. A lot has changed since then. Advances in artificial intelligence and deep learning are beginning to do amazing things, from autonomous vehicles to near-human language comprehension to AI assistants that blow Siri clean out of the water.

And with big, powerful companies like Google, Microsoft, Facebook, IBM and NVIDIA all racing to develop AI hardware, software and algorithms to address real-world needs and market demand, it's now commonly thought that AI could one day soon be much more intelligent than humans. As Elon Musk recently warned, an AI agent in the wrong hands, or gone rogue, could be a very real threat, and not just on game shows. It's the kind of thing that keeps him up at night, and it's no longer just the stuff of science fiction.

IBM's Watson sporting at least a 25-gallon cowboy hat on Jeopardy! - Credit: flickr.com/charliecurve

Fortunately, some of the major players are also working on ways to keep super-intelligent AI agents under control. In fact, a team of researchers at Google-owned DeepMind (the team that built AlphaGo, the machine that handily beat Lee Sedol), along with University of Oxford scientists, is developing a proverbial kill switch of sorts for AI. Google acquired artificial intelligence startup DeepMind back in 2014 for $580 million or so, and back in the day Google executive chairman Eric Schmidt called it "an important bet." Together with Oxford, the team has released a paper entitled "Safely Interruptible Agents."

The paper's abstract lays out the problem: "Reinforcement learning agents interacting with a complex environment like the real world are unlikely to behave optimally all the time. If such an agent is operating in real-time under human supervision, now and then it may be necessary for a human operator to press the big red button to prevent the agent from continuing a harmful sequence of actions—harmful either for the agent or for the environment—and lead the agent into a safer situation."

Future of Humanity Institute, University of Oxford Philosopher - Stuart Armstrong

So whether or not you buy into the possibility that AI could one day be so powerful that we're faced with a proverbial Skynet apocalypse, some rather smart folks working in this space believe it's a very real threat that we need to get ahead of and mitigate. Other technologists and philosophers, like Nick Bostrom, have underscored this as a critical first step as well.

The "Safely Interruptible Agents" paper goes on to note: "...if the learning agent expects to receive rewards from this sequence [of actions], it may learn in the long run to avoid such interruptions, for example by disabling the red button—which is an undesirable outcome. This paper explores a way to make sure a learning agent will not learn to prevent (or seek!) being interrupted by the environment or a human operator."
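To make that incentive problem concrete, here is a rough toy sketch of our own in Python. It is not the paper's algorithm, and every name and number in it (`expected_return`, `preferred_action`, the 10-step horizon, the 50 percent interruption chance) is a made-up assumption for illustration. The agent earns one point per step of work and can spend its first step disabling the button. If it counts interruptions as lost reward, tampering looks like the better deal; if it values its options as though interruptions never happen, it has no reason to touch the button.

```python
def expected_return(disable_button: bool, horizon: int,
                    p_interrupt: float, count_interruptions: bool) -> float:
    """Expected total reward for a toy agent that earns 1 point per working step.

    Hypothetical setup: disabling the button costs the first step of work.
    If the button is left alone, a human may end the run after any step
    with probability p_interrupt.
    """
    total = 0.0
    p_still_running = 1.0  # chance the agent has not been interrupted yet
    for step in range(horizon):
        if disable_button and step == 0:
            continue  # this step is spent tampering, so no reward is earned
        total += p_still_running
        if count_interruptions and not disable_button:
            p_still_running *= 1.0 - p_interrupt
    return total


def preferred_action(horizon: int, p_interrupt: float,
                     count_interruptions: bool) -> str:
    """Pick whichever choice has the higher expected return."""
    leave = expected_return(False, horizon, p_interrupt, count_interruptions)
    disable = expected_return(True, horizon, p_interrupt, count_interruptions)
    return "disable_button" if disable > leave else "leave_button"


if __name__ == "__main__":
    # Naive agent: interruptions count against its expected reward.
    print(preferred_action(10, 0.5, count_interruptions=True))
    # Agent that values its options as if interruptions never occur.
    print(preferred_action(10, 0.5, count_interruptions=False))
```

With these made-up numbers, the naive agent expects about 2 points if it leaves the button alone versus 9 if it disables it, so it tampers. The second agent sees 10 versus 9 and leaves the button be.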

Sounds like good science to us. If we're developing an all-powerful AI, we had better develop one that doesn't mind being shut down if we so desire. It had also better be programmed not to reprogram itself to ignore that red button when we hit it. That's the short, layman's-terms version for ya. You go with that, Stuart and team DeepMind.
