Intel 3rd Gen Xeon Scalable Launched: 10nm Ice Lake-SP To Supercharge Data Centers

Intel Ice Lake-SP Details

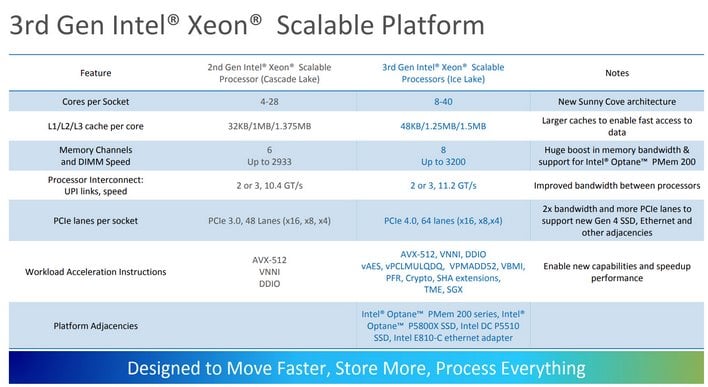

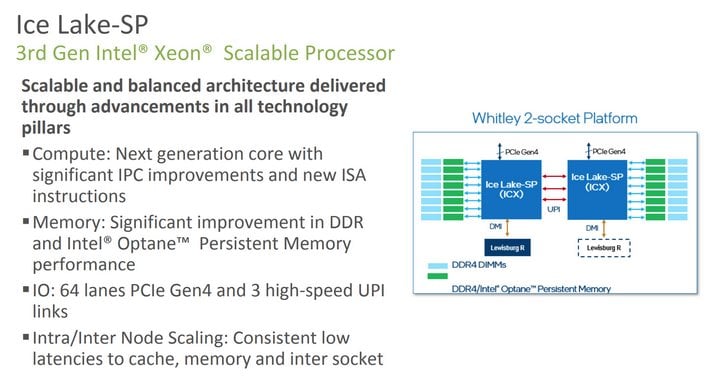

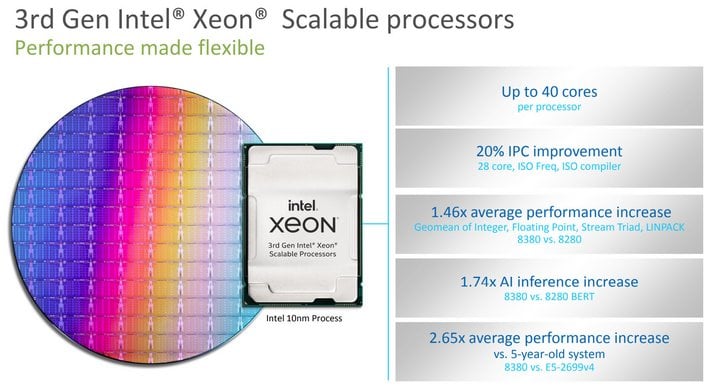

At the foundation of Intel’s 3rd Gen Intel Xeon Scalable processors is the Ice Lake-SP microarchitecture. Fundamentally, Ice Lake-SP is similar to the previously launched, lower-power Ice Lake (non-SP), which debuted a couple of years back. However, it is beefed up and augmented to address the needs of modern data centers, the cloud, and edge computing workloads.

Most of the IP in Ice Lake-SP has been updated and enhanced over previous-gen architectures to improve performance or efficiency. Ice Lake-SP is built using Intel’s latest transistor technology. A newer core microarchitecture with a ~20% IPC improvement -- dubbed Sunny Cove -- is employed in the CPUs, and additional instructions are available to accelerate a variety of burgeoning workloads.

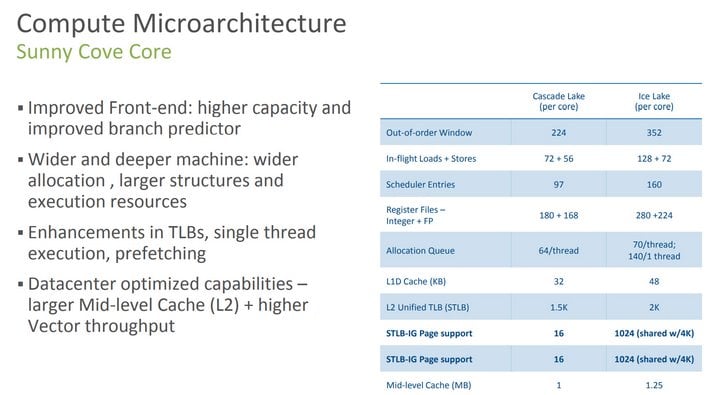

Sunny Cove is wider and deeper than Intel’s previous-generation core microarchitectures. It has larger key internal structures and larger caches, to not only help improve IPC, but overall multi-core scaling and performance as well. There are now 5 allocation units, 10 execution ports, 4 AGUs, and more L1 store bandwidth per core. Sunny Cove also features a more accurate branch prediction unit, among a number of other tweaks.

In comparison to the older Haswell and Skylake microarchitectures, Ice Lake and its Sunny Cove core are enhanced virtually across the board. In addition to the aforementioned features, Sunny Cove has more L1 and L2 cache per core, and a larger Translation Lookaside Buffer (TLB). The architecture's uOP (micro-op) cache is larger as well. It also has a larger out-of-order window, and it can handle many more scheduler entries and in-flight loads and stores.

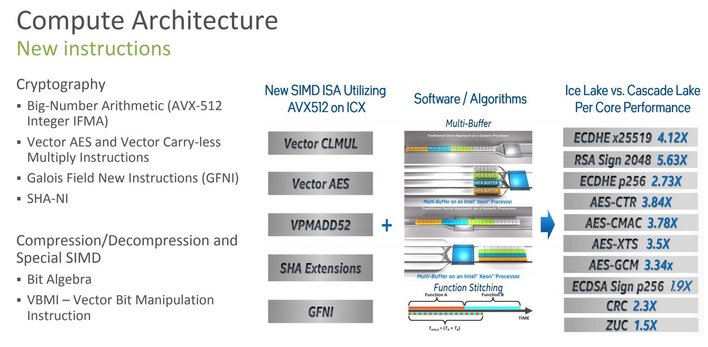

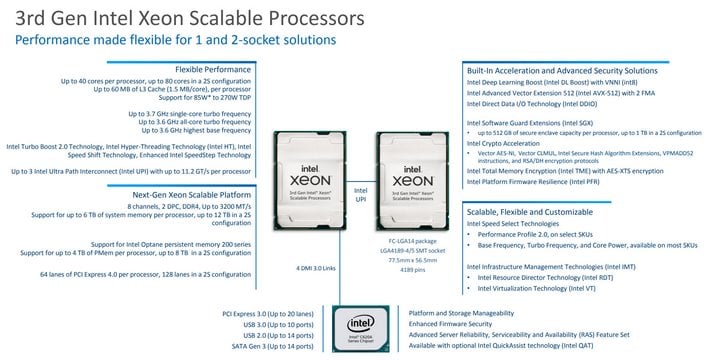

In addition to the wider and deeper aspects of Sunny Cove, the design has some new capabilities as well. It has two FMA units (1 x 512 and 1 x 256), and new instructions for accelerating crypto (Intel Crypto Acceleration), big number arithmetic (IFMA), Vector AES, Vector Carryless Multiply, and SHA hashing. According to Intel, its Crypto Acceleration tech offers particularly strong performance across a variety of cryptographic algorithms, which are important to customers that run encryption-intensive workloads, such as online retailers that process millions of customer transactions per day. Intel claims its Crypto Acceleration can protect customer data without impacting response times or overall system performance. Some of the per-core performance improvements versus the previous-gen Cascade Lake are outlined in the slide above. As you can see, for some workloads, Ice Lake can be many times faster.

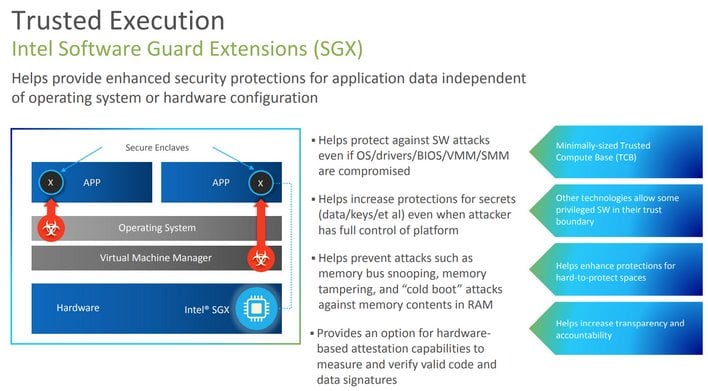

Ice Lake-SP also features Deep Learning Boost (DL Boost) to accelerate many AI workloads, and it incorporates additional security features as well, like Intel Software Guard Extensions (Intel SGX), which offers up to 512GB of secure enclave capacity per processor (or up to 1TB in a 2P configuration).
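For readers who want to check whether a given Linux machine exposes the instruction-set extensions discussed here, the following is a minimal sketch. It assumes the feature names map to the flag strings Linux reports in `/proc/cpuinfo` (`vaes`, `vpclmulqdq`, `avx512ifma`, `sha_ni`, and `avx512_vnni` for DL Boost); the helper names `parse_flags` and `report_features` are hypothetical and not part of any Intel tool.

```python
from __future__ import annotations

from pathlib import Path

# Assumed mapping from the features named in the article to Linux cpuinfo flags.
FEATURE_FLAGS = {
    "Vector AES": "vaes",
    "Vector Carryless Multiply": "vpclmulqdq",
    "IFMA (big number arithmetic)": "avx512ifma",
    "SHA extensions": "sha_ni",
    "DL Boost (AVX-512 VNNI)": "avx512_vnni",
}


def parse_flags(cpuinfo_text: str) -> set[str]:
    """Return the flags listed on the first 'flags' line of cpuinfo-style text."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            return set(value.split())
    return set()


def report_features(cpuinfo_text: str) -> dict[str, bool]:
    """Map each feature name to whether its assumed flag is present."""
    flags = parse_flags(cpuinfo_text)
    return {name: flag in flags for name, flag in FEATURE_FLAGS.items()}


if __name__ == "__main__":
    path = Path("/proc/cpuinfo")
    # On non-Linux systems the file does not exist, so every feature reports "no".
    text = path.read_text() if path.exists() else ""
    for name, present in report_features(text).items():
        print(f"{name}: {'yes' if present else 'no'}")
```

A flag being present only indicates that the CPU and kernel expose the instructions; software still has to be built or configured to use them to see the kind of gains Intel describes.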

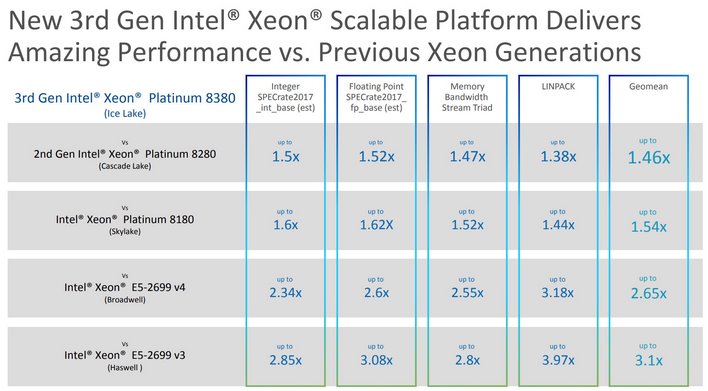

More Compute, Lower Latency, More Bandwidth

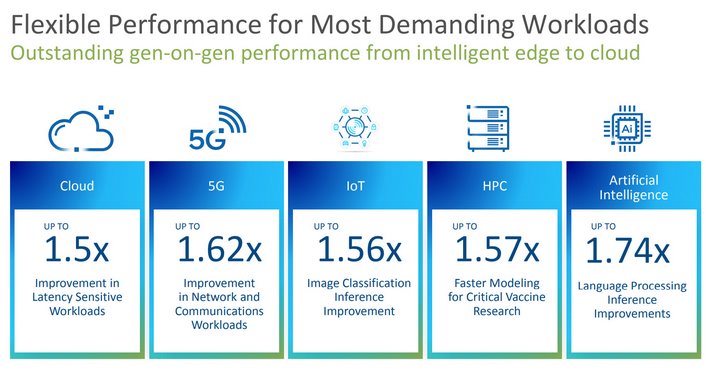

All told, Intel’s 3rd Gen Intel Xeon Scalable processors offer more cores, additional memory capacity and bandwidth, and more intra- and inter-processor bandwidth. Intel is claiming a 1.46X average performance increase over previous-gen products for traditional compute workloads and a 1.74x improvement for AI Inference in BERT (Bidirectional Encoder Representations from Transformers) workloads, comparing the new 3rd Gen Xeon 8380 versus a previous-gen 8280. And versus a 5-year-old platform, Intel is claiming a 2.65x average performance increase.

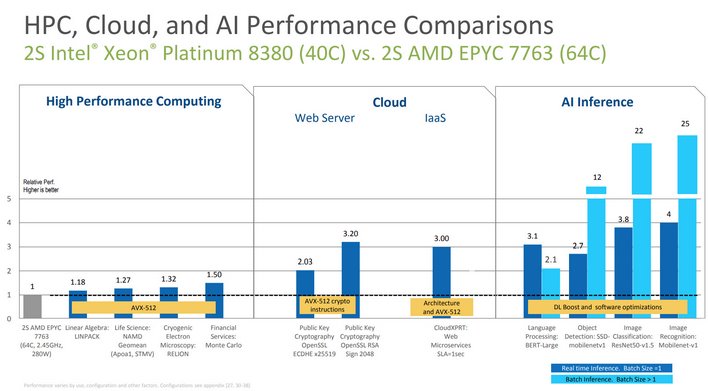

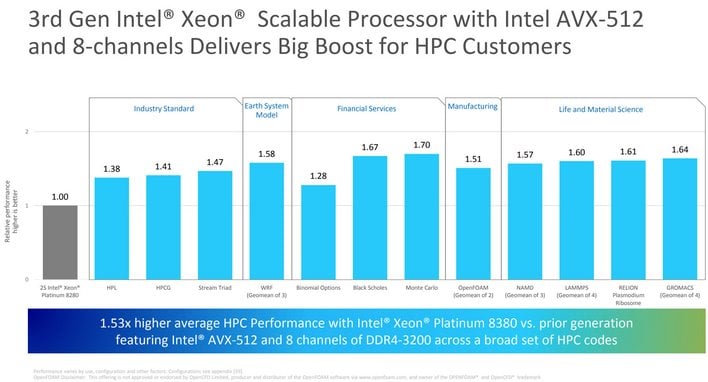

Intel provided a host of performance data for its new 3rd Gen Xeon Scalable processors, comparing them to both previous-gen Intel offerings and AMD's competing EPYC platform. A plethora of comparisons were made in Intel's presentation, but the handful of slides above are a good summary of the Intel vs. Intel comparisons. Across Cloud, HPC, 5G, IoT, and AI workloads, the new 3rd Gen Xeon Scalable processors show significant uplifts across the board. In more traditional compute and memory-related workloads, versus the prior four generations of Xeon platforms, the new 3rd Gen Xeon Scalable processors seem just as impressive. Intel customers coming from a Xeon E5-series product are likely to see significant performance improvements, along with higher density and other platform enhancements.

Versus rival AMD's EPYC platform, Intel is also claiming many victories, despite a monumental deficit of up to 24 cores per processor. 3rd Gen Xeon Scalable processors top out at 40 cores, whereas AMD's EPYC series can have up to 64 cores. In standard compute workloads that don't leverage any special instructions, 3rd Gen Xeon Scalable processors will not be able to overtake higher core-count EPYC processors in most circumstances, socket vs. socket. However, when AVX-512, new crypto instructions, or DL Boost are added to the equation (in conjunction with Intel's software optimizations), Intel is claiming some major performance advantages over AMD, especially in Cloud and AI Inference workloads.

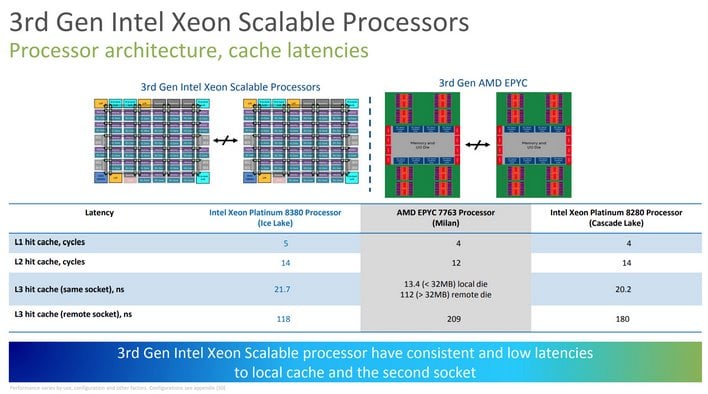

Due to architectural efficiencies in Intel's monolithic design and enhancements in Ice Lake-SP / Sunny Cove, Intel's 3rd Gen Xeon Scalable processors may offer significantly lower latencies in some situations as well. Dissecting L1 - L2 - L3 cache hits highlights one of the disadvantages of AMD's chiplet-based design. When remaining within a single, local die, AMD has a latency advantage versus both 2nd and 3rd Gen Xeon Scalable processors. But once data has to be fetched from a remote die, cache latency with EPYC can be ~2x - ~5x higher. Given the massive amounts of cache on EPYC, this isn't going to be a major concern for the overwhelming majority of workloads, but with massive datasets Intel's architecture may have an advantage in some corner cases.

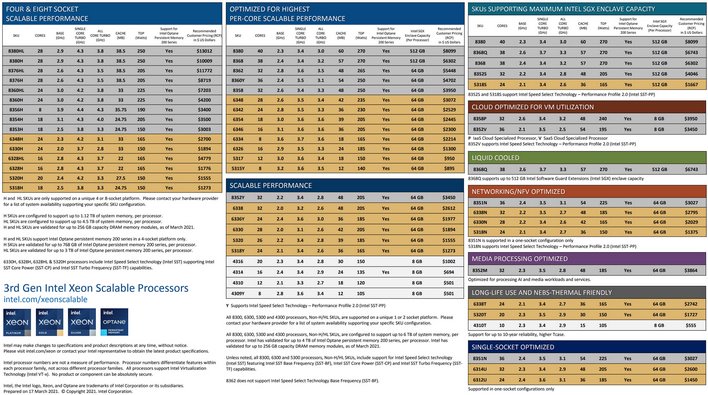

3rd Gen Xeon Scalable Processor Line-Up

A plethora of 3rd Gen Intel Xeon Scalable processors are coming down the pipeline. They are broken down into many categories in the table above, but note that the same model may be listed in multiple sections, and that the 4-8S options are actually based on Cooper Lake. Core counts in the line-up range from 8 to 40 cores per processor, with up to 60MB of cache, and support for up to 8 memory channels at speeds up to DDR4-3200 per socket, for up to 6TB of total system memory (factoring in DRAM and Persistent Memory). All of the processors support PCI Express Gen 4, with up to 64 lanes of connectivity, and they all support Intel's Optane Persistent Memory technology as well. TDPs vary depending on the maximum base and boost frequencies and core count / configuration (up to a 270W TDP), and the letters in the model numbers designate the maximum number of sockets supported. 3rd Gen Intel Xeon Scalable processors feature up to 3 UPI links between processors, at speeds up to 11.2 GT/s (vs. 10.4 GT/s in 2nd Gen Xeons), and they can support up to 8 sockets -- though in configurations above 2P, the maximum core count per processor is 28, because they are based on Cooper Lake. 40-core 3rd Gen Intel Xeon Scalable processors are available in 1P and 2P configurations only.
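To put the memory and interconnect figures above in perspective, here is a rough back-of-the-envelope sketch. It assumes the standard 64-bit (8-byte) data width of a DDR4 channel, and the function names are hypothetical. The results are theoretical peaks, not measured throughput, which will be lower in practice.

```python
def peak_ddr_bandwidth_gbs(channels: int, mega_transfers_per_s: int,
                           bus_bytes: int = 8) -> float:
    """Theoretical peak bandwidth in GB/s: channels x MT/s x bytes per transfer."""
    return channels * mega_transfers_per_s * bus_bytes / 1000


def relative_uplift(new: float, old: float) -> float:
    """Fractional increase of `new` over `old` (0.10 means 10% higher)."""
    return new / old - 1.0


# 8 channels of DDR4-3200 per socket, as quoted in the article.
per_socket = peak_ddr_bandwidth_gbs(channels=8, mega_transfers_per_s=3200)
two_socket = 2 * per_socket

# UPI link speed: 11.2 GT/s on 3rd Gen vs. 10.4 GT/s on 2nd Gen.
upi_uplift = relative_uplift(11.2, 10.4)

if __name__ == "__main__":
    print(f"Peak DRAM bandwidth per socket: {per_socket:.1f} GB/s")
    print(f"Peak DRAM bandwidth in 2P:      {two_socket:.1f} GB/s")
    print(f"UPI per-link speed uplift:      {upi_uplift:.1%}")
```

Under these assumptions, a single socket works out to a theoretical 204.8 GB/s of DRAM bandwidth, and the per-link UPI speed is roughly 7.7% higher than the previous generation.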

In terms of pricing, 3rd Gen Intel Xeon Scalable processors top out at just above $13K, with the flagship 40-core Xeon Scalable 8380 arriving at $8,099. While big-iron Xeon processors for mission-critical applications always command a premium, the 3rd Gen Intel Xeon Scalable 8380's introductory price is thousands less than the previous-gen 8280, which was released in 2019.

Intel is also quick to point out that data center platforms are not about the processors alone. There are many adjacent products and software featured in Intel's total platform solution. Intel’s 3rd Gen Intel Xeon Scalable platform includes support for Intel Optane persistent memory 200 series, Intel’s latest Optane Solid State Drive P5800X and D5-P5316 NAND SSDs, as well as Intel Ethernet 800 Series Network Adapters and Intel Agilex FPGAs. Intel has also made significant investments in software to optimize its platforms. And it's the top-to-bottom solution that has helped Intel maintain a leadership position in data centers, despite intense competition from multiple vectors.

All told, although the 10nm Ice Lake-SP based 3rd Gen Intel Xeon Scalable processors are arriving later than Intel had initially hoped, they are a major step forward for the company and its customers. The enhancements arriving with the platform should result in significant increases in performance and density for Intel's customers, with additional capabilities and features as well.