NVIDIA Jetson AGX Orin Dev-Kit Eval: Inside An AI Robot Brain

NVIDIA Jetson AGX Orin: Working With The Dev Kit And Conclusions

Now that we've taken a tour of the tools bundled with the developer kit, it's time to think about practical applications. What will developers do with the Jetson AGX Orin? NVIDIA gave us some clues in the system's bundled accessories, for sure. The USB headset and 720p webcam will certainly serve developers well when it comes to implementing models and applications for visual and audio learning. While there's a ton of functionality built into the JetPack toolkit, the company also has this handy video that covers some interesting use cases for Jetson hardware.

The protagonist of this story is Orion, an autonomous guide robot that takes a visitor to his conference room. Using a combination of onboard cameras and sensors along with video streamed from cameras throughout the building, it can detect where there's a crowd and plan an optimized route to avoid congestion, all while navigating obstacles like glass doors and people. NVIDIA says all of that is happening right inside the bot's hardware, running on the Robot Operating System (ROS).

Along with more novel implementations like Orion, NVIDIA says that Jetson AGX Orin will be deployed in just about every sector. Autonomous robots will apparently find their way into huge companies like John Deere, Medtronic, and Komatsu along with cloud compute platforms like Amazon Web Services and Microsoft Azure. For their part, AWS and Azure both have NVIDIA's A100 platform available for cloud AI tasks like training models and inference.

Incidentally, calling back to our ASR demo on the previous page, Orion is a good example of what NVIDIA strives for. This demo was likely scripted out well in advance, but a robot is going to have to interpret that same kind of conversation across every visitor to a complex. Each visitor can say things differently -- accents are just part of the equation, along with sentence structure and word choices -- and the AI needs to be able to infer what matters and discard what's unimportant.

Jetson AGX Orin: Tons of Performance

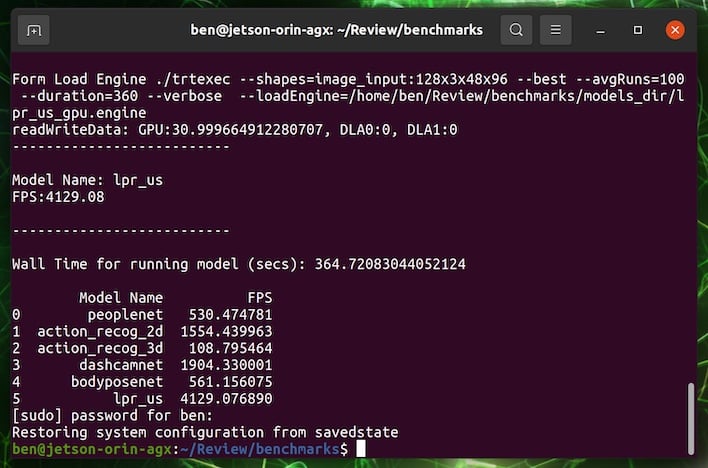

Next up is a pure performance test. The Vision Benchmark tests the performance of six different visual models to see how fast each can process data individually. The tricky thing about benchmarks like these is that robots that rely on these models run several of them simultaneously. The robots need to navigate by recognizing landmarks, identify people, work around obstacles, and plan their actions all at the same time. So even though some of these results look really fast, bear in mind that no single model will have full access to all of the Orin's resources in a normal scenario.
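To put a rough number on that caveat, here is a minimal sketch of what happens to frame rate when several models must each run once per frame. It assumes the simplest possible case, where the models run back to back with no overlap, so it is a pessimistic estimate rather than a description of how the Orin actually schedules work. The function name and the frame rates are hypothetical and are not taken from the benchmark.

```python
def combined_fps(standalone_fps):
    """Frame rate when every model runs once per frame, one after another.

    Assumes no overlap between models, which is a simplification.
    """
    rates = list(standalone_fps)
    if not rates:
        raise ValueError("need at least one model")
    # Per-frame time is the sum of each model's per-frame latency (1 / fps).
    seconds_per_frame = sum(1.0 / fps for fps in rates)
    return 1.0 / seconds_per_frame


# Hypothetical standalone results, not figures from the benchmark.
example = {"detector": 400.0, "pose": 200.0, "segmentation": 100.0}
print(round(combined_fps(example.values()), 1))  # 57.1
```

Under these assumptions, three models that each look fast in isolation combine to a rate lower than the slowest of them, which is why individual results overstate what a multi-model robot will see.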

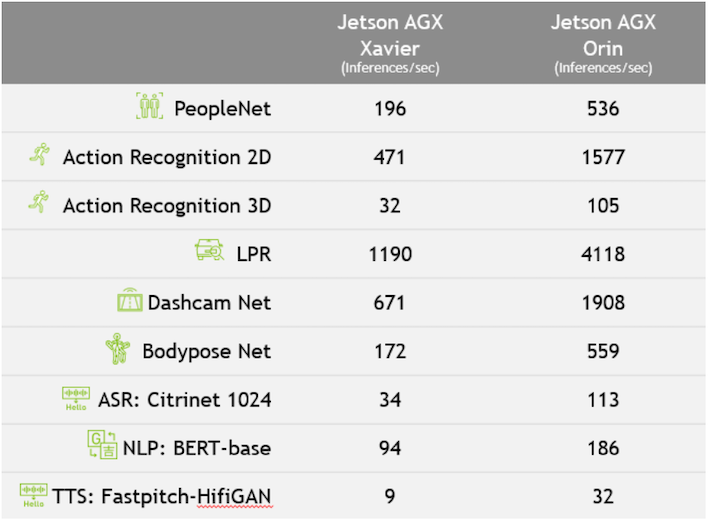

The benchmark runs with the Jetson's configurable thermal budget at its maximum of 60 Watts, and as we'll discuss shortly, Orin is way out in front of the previous generation. While these benchmarks may not really show it in isolation, NVIDIA says the big Ampere-based GPU is a huge step up over the previous Jetson AGX Xavier. In fact, the company claims Orin is capable of eight times the compute performance of Xavier, and it's twice as energy efficient. Xavier isn't exactly an ancient platform these days, having first been released in early 2019, but those numbers also somewhat expose the fact that Orin has a much bigger thermal budget at the top end. Jetson AGX Orin has the same pin-out and footprint as its predecessor too, so swapping modules to fit the newer platform should be simple.

Jetson AGX Orin obviously boasts a lot of compute horsepower for a single AI platform, but it's got a relatively high 60 Watt power budget. However, that power budget is configurable by developers based on the needs of the application. The TDP can be configured all the way down to 15 Watts, same as Xavier, and at that point NVIDIA says it's still twice as fast as its predecessor. Speaking of performance, let's take a look at NVIDIA's own internal numbers for the Xavier against the updated Orin.
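The relationship between a speedup claim and an efficiency claim is simple arithmetic: relative performance per watt is the speedup divided by the ratio of the two power budgets. The sketch below works that out for the 15 Watt comparison quoted above. The function name is hypothetical, and this is back-of-the-envelope math rather than anything NVIDIA publishes.

```python
def efficiency_ratio(speedup, new_watts, old_watts):
    """Relative performance per watt: speedup divided by the power ratio."""
    if new_watts <= 0 or old_watts <= 0:
        raise ValueError("power budgets must be positive")
    return speedup / (new_watts / old_watts)


# NVIDIA's claim as quoted above: twice as fast with both modules at 15 W.
print(efficiency_ratio(2.0, 15.0, 15.0))  # 2.0
```

At equal power, the efficiency gain is just the speedup. Checking the 60 Watt figure the same way would require Xavier's power draw in that comparison, which isn't stated here.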

Prior to Orin's release, NVIDIA claimed the top spot among AI edge accelerators in MLPerf with Jetson AGX Xavier, where it sat uncontested for its lifetime. An edge accelerator is a non-server application of an AI compute platform, like a service robot. The new Orin module is pretty darned fast in comparison to its Xavier predecessor, so it stood to reason that it would take over the top spot, and NVIDIA announced on April 6 that that was indeed the case. NVIDIA's performance claims range anywhere from more than twice as fast with the PeopleNet person detection AI model to nearly 3.5 times as fast in the LPR (license plate recognition) model, which is used in autonomous vehicles.

The more data a platform can process in real-time, the more sensors it can handle at once. That's why in NVIDIA's demo, Orion could look at multiple streaming camera feeds as well as its onboard sensors, calculate routes, and navigate the building with on-device hardware. In the real world, that means more cameras and sensors (including LiDAR) for safer autonomous vehicles, faster speech recognition for conversational AI, and predicting where people are going while inferring what they're doing.
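The link between throughput and sensor count can be sketched with one division. The example below assumes a single model whose total throughput is shared evenly between camera streams. The function name and all of the frame rates are hypothetical, chosen only to show how a faster platform translates into more feeds.

```python
import math


def max_streams(pipeline_fps, per_stream_fps):
    """How many streams fit if each one needs per_stream_fps of processing."""
    if pipeline_fps < 0 or per_stream_fps <= 0:
        raise ValueError("rates must be positive")
    return math.floor(pipeline_fps / per_stream_fps)


# Hypothetical: a model that sustains 240 fps in total, cameras at 30 fps each.
print(max_streams(240.0, 30.0))        # 8
# The same model on a platform 2.5 times as fast.
print(max_streams(240.0 * 2.5, 30.0))  # 20
```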

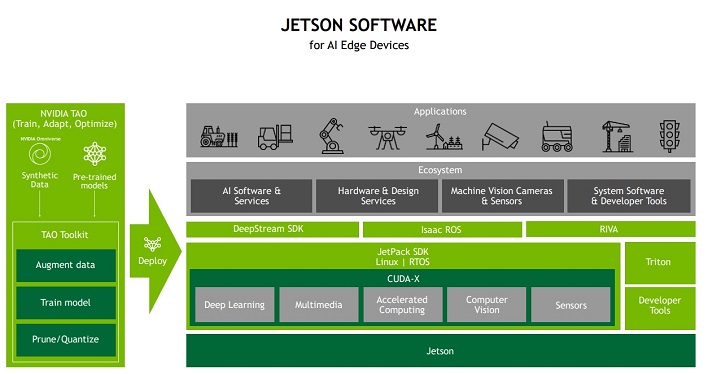

NVIDIA's Software Development Platform

JetPack, the SDK and set of libraries used to speed up AI and robotics development, is just one part of NVIDIA's toolkit. Still, it's the most public-facing of its tools and the one that developers will likely be most interested in. It runs on the latest CUDA 11.0 and TensorRT 8.0 platforms and takes full advantage of NVIDIA's Ampere GPU architecture. The toolkit runs on Ubuntu Linux 20.04 LTS with Linux kernel 5.10 and a secure hardware environment that includes OP-TEE, the trusted execution environment, and full-disk encryption. That means if your Jetson hardware is stolen, your secrets should be safe unless the thief can log into your account.

NVIDIA knows that its pre-trained AI models aren't a one-size-fits-all proposition, and so about the same time that Jetson AGX Xavier came out, NVIDIA created TAO, or Train-Adapt-Optimize. TAO is NVIDIA's framework for modifying its pre-trained models. It gives developers a way to begin training a proof-of-concept model on the Jetson AGX Orin, and then deploy the modified model with DeepStream, NVIDIA's SDK for deploying Vision AI applications both on Jetson and as services in the cloud. Developers can also bring their own models from TensorFlow, PyTorch, or ONNX and deploy them to the cloud with DeepStream. TAO also uses NVIDIA's Omniverse Replicator, which can procedurally generate synthetic data to help train models automatically. NVIDIA says this will reduce the time investment required to get an AI ready for a production environment.

Other parts of the development platform each have their own place, too. A robot needs an operating system, and here that system is ROS, backed by NVIDIA's own packages. Included with the Jetson AGX Orin kit are NVIDIA's Isaac ROS GEMs. The GEM package is full of hardware-accelerated libraries that make it easy for developers to implement common actions like movement on NVIDIA hardware. This works hand-in-hand with Fleet Command, NVIDIA's cloud service for deploying, managing, and scaling AI applications. It can provide OTA updates to in-service robots and cloud applications alike, along with deep monitoring and analytics functionality.

All of this software works together to help developers quickly create or re-purpose existing AI models, test them in a development environment, and ultimately deploy them either as part of individual service robots or in the cloud.

Jetson AGX Orin Conclusions

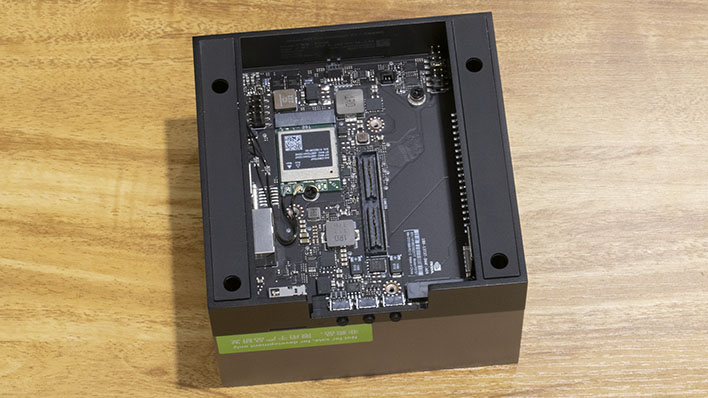

It's fair to say that in our time with the NVIDIA Jetson AGX Orin developer kit, we've just scratched the surface of the current state of AI and machine learning. In fact, there are really two products that NVIDIA was showcasing as part of this product's release: the Jetson AGX Orin kit itself, and DeepStream, released with Jetson AGX Xavier, for deploying AI models and quickly making inferences. DeepStream has a whole lot of horsepower available, and it was pretty simple to deploy our examples to Azure. The fact that NVIDIA handles deployment in its tools automatically, rather than making developers wade through buckets of menus on the Azure portal, certainly helps a lot.

However, our main focus is the Jetson AGX Orin itself. This is a pretty darn capable piece of kit for developers, and since the only way to currently do JetPack development is with one of these kits, it needs to be. That's because all the sensor headers you need, and indeed even the physical form factor itself, are present on the dev kit's board, whereas a lot of this just isn't available for PCs. With up to a 60 Watt thermal budget and a load of graphics resources under the hood, we found that everything we tried on the device itself worked pretty well in real-time. That's an absolute necessity for an AI-focused application or a service robot that needs to navigate a building on its own, and the Orin has plenty of horsepower for it.

The only thing that might give some potential users pause is the entry price. This developer kit is available for purchase from distributors for $1,999. Keep in mind that this isn't meant for hobbyists. The Jetson AGX Orin, like Xavier before it, is built for industrial applications and will be just one component in a robot that could cost many multiples of this. From that perspective, the price makes more sense. The good news is that there are dev kits all the way down the pricing bracket to the $70 Jetson Nano 2 GB that we evaluated last year.

We'd encourage newcomers to get their feet wet, and then step up when they have a more practical application that requires all the speed and resources that the Orin platform provides. There are myriad community projects that can make use of lighter hardware in the meantime, and who knows: if your project is a cut above, you just might win this more powerful setup from NVIDIA yourself. If you know what you're trying to do with this hardware, the Jetson AGX Orin developer kit is excellent and should serve AI-fueled developers, engineers and makers very well.